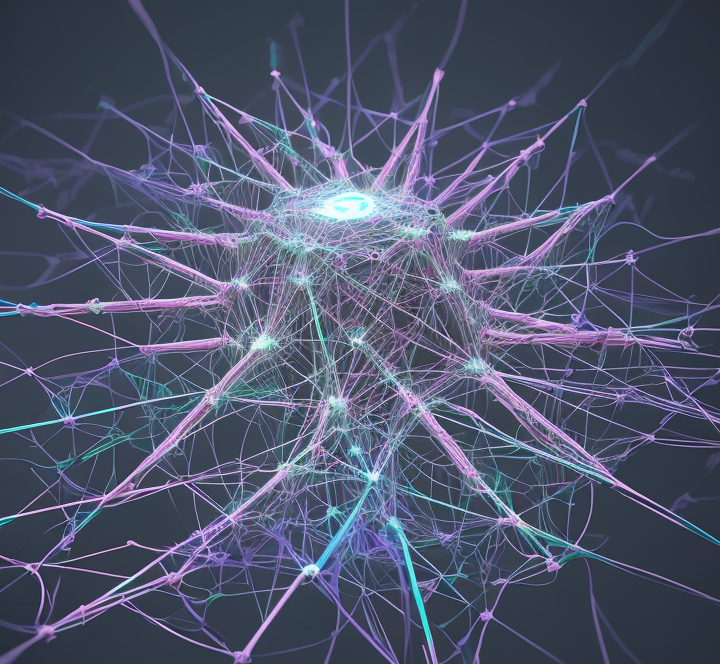

Hello, ML enthusiasts! 🚀🤖 We analyzed rotational equilibria in our latest work, "Rotational Equilibrium: How Weight Decay Balances Learning Across Neural Networks".

💡 Our Findings: Balanced average rotational updates (effective learning rate) across all network components may play a key role in the effectiveness of AdamW.
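For anyone wondering what a "rotational update" means in practice, here is a minimal sketch, assuming we simply measure the angle between a weight vector before and after one optimizer step. The helper name `angular_update` is made up for this illustration and is not taken from the paper's code.

```python
import numpy as np

def angular_update(w_old, w_new):
    """Angle (radians) between a weight vector before and after one step.

    Hypothetical helper for illustration: a larger angle means the step
    rotated the weights more, regardless of how their norm changed.
    """
    cos = np.dot(w_old, w_new) / (np.linalg.norm(w_old) * np.linalg.norm(w_new))
    # Clip to guard against values slightly outside [-1, 1] from rounding.
    return float(np.arccos(np.clip(cos, -1.0, 1.0)))

w = np.array([1.0, 0.0])
rotated = angular_update(w, np.array([1.0, 1.0]))  # direction changes
scaled = angular_update(w, 2.0 * w)                # only the norm changes
print(rotated, scaled)
```

Rescaling a vector leaves the angle at zero, so this measure only picks up changes in direction. Comparing the average of this angle across layers is one way to check whether they are rotating at similar rates.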

🔗 Rotational Equilibrium: How Weight Decay Balances Learning Across Neural Networks

Looking forward to hearing your thoughts! Let’s discuss more about this fascinating topic together!

Please explain like I’m 5 years old.

Maybe I understand the following:

(My apologies if this is grossly simplified and doesn’t help.)

1- Better neural networks need to contain more (stacked) layers.

2- The input layer at one end of the stack is exposed to messy information from the real world.

3- At the other end, the output layer provides the network’s results.

4- The first step in making this work is training the network, which is when the learning happens.

5- Instabilities and stagnation often occur in some layers when learning does not happen in an optimal way. This problem increases exponentially with the number of layers.

6- Here, learning is applied to all the layers at once. Something called rotation, which I don’t understand, is used to stabilize and optimize the learning.

I feel this is very different from human learning, which happens in stages: we first learn words, then try to assemble them into simple sentences, then move on to making sense of more complex notions, and so on. I wish this approach could also be applied in artificial intelligence development.

The human brain isn’t a blank slate when it comes into existence. It already has structures that are designed to do certain things. These structures come “pre-trained”, and a lot of the learning humans do is more akin to the fine-tuning that we do for foundation models.

P.S.: see also:

https://en.m.wikipedia.org/wiki/Stochastic_gradient_descent#Adam
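For readers who prefer code to the formulas on that page, a single AdamW step can be sketched roughly as below. This is a plain NumPy illustration, assuming the usual decoupled weight decay formulation. The function name `adamw_step` and the default hyperparameters are choices made for this example, not something taken from the paper.

```python
import numpy as np

def adamw_step(w, g, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999,
               eps=1e-8, weight_decay=1e-2):
    """One AdamW update for weights w given gradient g (illustrative sketch).

    m, v are the running first and second moment estimates,
    t is the 1-based step count used for bias correction.
    """
    m = beta1 * m + (1.0 - beta1) * g          # first moment (momentum)
    v = beta2 * v + (1.0 - beta2) * g * g      # second moment
    m_hat = m / (1.0 - beta1 ** t)             # bias-corrected moments
    v_hat = v / (1.0 - beta2 ** t)
    # Decoupled weight decay: the decay term is added to the step itself,
    # not folded into the gradient before the moment estimates.
    w = w - lr * (m_hat / (np.sqrt(v_hat) + eps) + weight_decay * w)
    return w, m, v

# Toy usage with random gradients (an assumption, there is no real loss here).
rng = np.random.default_rng(0)
w = rng.normal(size=16)
m = np.zeros_like(w)
v = np.zeros_like(w)
for t in range(1, 101):
    g = rng.normal(size=w.shape)
    w, m, v = adamw_step(w, g, m, v, t)
print(np.linalg.norm(w))
```

The gradient term tends to move the weights around while the decay term shrinks their norm. As I understand it, the balance between those two effects is what the paper studies.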